I spent a week reading llama.cpp’s source. Not the GitHub README, not the model card — the actual C that runs when you type ./llama-cli -m llama-7b-q4.gguf. What I found is one of the better-engineered pieces of C++ I’ve encountered in the ML space, and I want to walk through exactly why it’s fast.

The headline number: a 7B-parameter model in FP16 is 14 GB. llama.cpp runs it in under 4 GB, on CPU, at conversational speed. No CUDA runtime. No Python. No 2 GB of framework dependencies. 105K GitHub stars, but the star count is the least interesting thing about it.

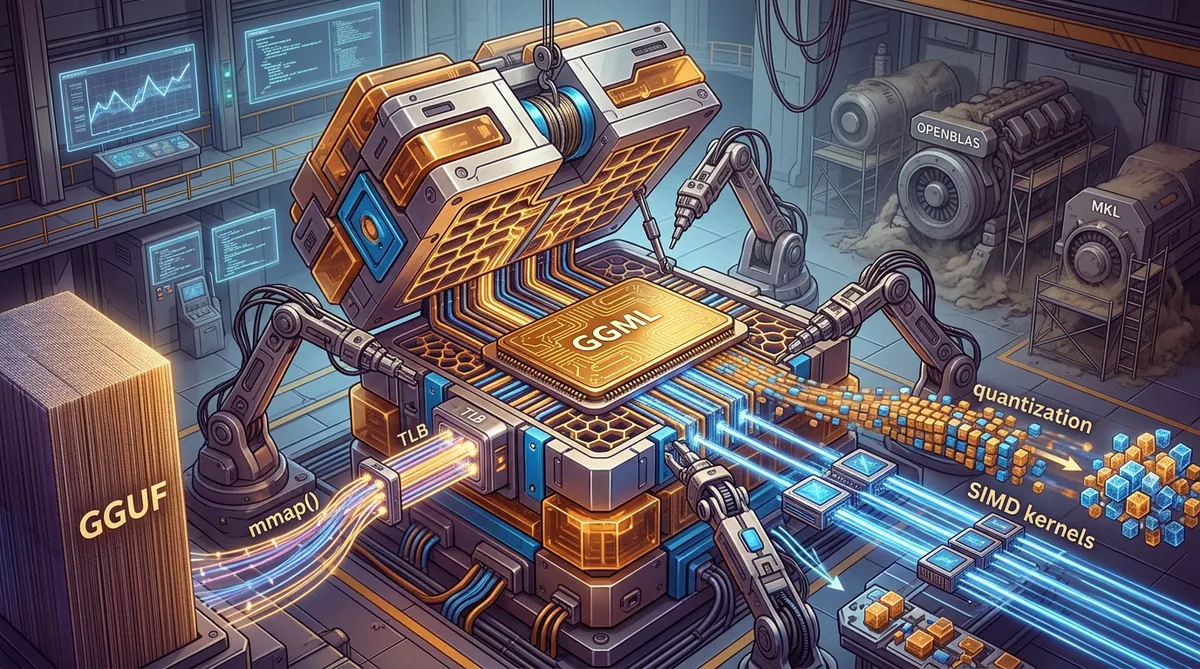

Three techniques make this work: quantization that compresses weights 3–7x with bounded error, SIMD kernels that process quantized data 46.7x faster than scalar FP32, and mmap() that turns model loading into a TLB lookup. Each one is worth pulling apart.

What PyTorch Isn’t Doing

You can’t appreciate llama.cpp’s approach without understanding the stack it’s replacing.

PyTorch CPU inference goes: Python interpreter → pybind11 → ATen dispatch → BLAS abstraction → BLAS implementation → SIMD kernel. Six layers between model.generate() and the multiply instruction. Each adds overhead, but the real problem is deeper: BLAS libraries like MKL and OpenBLAS optimize for large batch GEMM — thousands of rows multiplied simultaneously. That’s the training workload.

Single-token LLM inference is a GEMV. One vector times a matrix. GEMM is compute-bound (O(n³) compute, O(n²) data). GEMV is memory-bandwidth-bound (O(n²) both ways). Optimizing for the wrong regime means optimizing for nothing.
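A quick arithmetic-intensity count makes the two regimes concrete. It assumes square n×n FP32 matrices and counts only compulsory memory traffic, meaning every operand is moved once:

```latex
\begin{aligned}
\text{GEMV } (y = Wx):&\quad \frac{2n^2\ \text{FLOPs}}{\approx 4n^2\ \text{bytes}} \approx 0.5\ \text{FLOP/byte} \\
\text{GEMM } (C = AB):&\quad \frac{2n^3\ \text{FLOPs}}{3 \cdot 4n^2\ \text{bytes}} = \frac{n}{6}\ \text{FLOP/byte} \approx 683\ \text{FLOP/byte for } n = 4096
\end{aligned}
```

At about half a FLOP per byte, a GEMV finishes its arithmetic long before the next cache line arrives, so memory bandwidth sets the pace. A GEMM does hundreds of FLOPs per byte and can keep the FMA units busy.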

llama.cpp throws out the entire stack. C++ calls directly into hand-written SIMD kernels that operate on quantized data in custom formats. The inference loop is about 200 lines of C building a computation graph and executing it. Minimal dispatch overhead. No BLAS required (it exists only as an optional backend). No Python.

GGUF and the Inference Loop

The codebase is built around GGML, a C tensor library handling memory allocation, computation graphs, and backend dispatch. Inference runs in three phases:

Model weights are memory-mapped from a GGUF file: a flat binary with a small header, the model's metadata, a table of tensor descriptors, and then the tensor data itself, laid out contiguously at aligned offsets (32 bytes unless the file specifies otherwise). No protobuf. No HDF5. No pickle-inside-a-zip. (PyTorch’s .pt format is the pickle-in-zip one, if you were wondering.) The metadata parses in a single sequential pass, and every variable-length field is length-prefixed. The tensor data is positioned so it can be mmap’d directly. The file is the tensor storage.
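To make the "fixed preamble, then aligned tensor data" layout concrete, here is a minimal sketch of reading the first 24 bytes of a GGUF file. It is based on my reading of the GGUF spec for versions 2 and 3 (version 1 used 32-bit counts). GgufHeader, parse_gguf_header, and align_offset are my own names, not ggml's API. The code assumes a little-endian host reading a little-endian file.

```cpp
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <optional>

// Fixed-size GGUF preamble (format versions 2 and 3).
struct GgufHeader {
    uint32_t magic;              // bytes "GGUF", i.e. 0x46554747 read little-endian
    uint32_t version;
    uint64_t tensor_count;       // number of tensor descriptors that follow the metadata
    uint64_t metadata_kv_count;  // number of metadata key/value pairs
};

// Parse the first 24 bytes of a GGUF file; returns nullopt on short input or bad magic.
std::optional<GgufHeader> parse_gguf_header(const unsigned char* p, size_t len) {
    if (len < 24 || std::memcmp(p, "GGUF", 4) != 0) return std::nullopt;
    GgufHeader h{};
    std::memcpy(&h.magic, p, 4);
    std::memcpy(&h.version, p + 4, 4);
    std::memcpy(&h.tensor_count, p + 8, 8);
    std::memcpy(&h.metadata_kv_count, p + 16, 8);
    return h;
}

// Round an offset up to the next multiple of the alignment (32 unless the file says otherwise).
uint64_t align_offset(uint64_t offset, uint64_t alignment = 32) {
    return offset + (alignment - offset % alignment) % alignment;
}
```

After these 24 bytes come the metadata key/value pairs and the tensor descriptors. Each descriptor's offset is relative to the start of the data section, which begins at the aligned end of the descriptor table, using the `general.alignment` metadata value (32 when absent).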

Tokenization is BPE in C++. It’s not a bottleneck and I won’t spend time on it.

The autoregressive loop runs one token at a time: build a computation graph, dispatch to the backend, sample. The hot path is matrix-vector multiplication: the current token’s activation vector against each full weight matrix, one dot product per output element. This is the function call that determines your tokens-per-second, and it’s where the quantization and SIMD work matters.
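Here is a plain scalar reference for that hot path, as a sketch. It assumes row-major FP32 weights, and `gemv` is a name of my choosing. Each output element is one dot product, and every weight is read exactly once per token, which is why the loop is bandwidth-bound.

```cpp
#include <cstddef>

// y = W x for a row-major (rows × cols) weight matrix W.
// One dot product per output element; each weight is touched exactly once.
void gemv(const float* W, const float* x, float* y, size_t rows, size_t cols) {
    for (size_t r = 0; r < rows; ++r) {
        const float* row = W + r * cols;  // contiguous row of weights
        float acc = 0.0f;
        for (size_t c = 0; c < cols; ++c) acc += row[c] * x[c];
        y[r] = acc;
    }
}
```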

Quantization: 14 GB → 3.8 GB

A 7B model in FP32 is 28 GB. FP16, 14 GB. Neither fits comfortably in laptop RAM, and both saturate the memory bus during inference. Quantization solves this by representing each weight with fewer bits.

GGML quantizes in blocks of 32 weights. Q4_0, the simplest format: find the block’s maximum absolute value, compute a scale factor, map each weight to a 4-bit integer in [0, 15] with zero point at 8. The physical layout:

| FP16 scale (2 bytes) | 32 × 4-bit quantized values (16 bytes) |

Total: 18 bytes for 32 weights = 4.5 bits/weight. That’s 3.6x compression over FP16 and 7.1x over FP32. A 7B model in Q4_0 is ~3.8 GB, small enough to fit in the RAM of a cheap laptop.
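The quantize step is described above only in words, so here is a sketch of it. It follows the approach of ggml’s reference Q4_0 quantizer as I understand it. The scale is the signed value of largest magnitude divided by -8. The low nibble of byte j holds element j, and the high nibble holds element j + 16. BlockQ4_0F is a hypothetical variant that stores the scale as a float instead of FP16 so the example stays self-contained.

```cpp
#include <cmath>
#include <cstdint>

constexpr int kQK = 32;  // weights per block

// Hypothetical Q4_0 block variant: ggml stores d as FP16; a float keeps this sketch short.
struct BlockQ4_0F {
    float d;              // scale
    uint8_t qs[kQK / 2];  // low nibble: element j, high nibble: element j + 16
};

// d = (signed value of largest magnitude) / -8; code = round(x / d) + 8, clamped to 15.
BlockQ4_0F quantize_q4_0(const float* x) {
    float amax = 0.0f, max = 0.0f;
    for (int i = 0; i < kQK; ++i) {
        if (std::fabs(x[i]) > amax) { amax = std::fabs(x[i]); max = x[i]; }
    }
    BlockQ4_0F blk{};
    blk.d = max / -8.0f;
    const float id = (blk.d != 0.0f) ? 1.0f / blk.d : 0.0f;
    for (int j = 0; j < kQK / 2; ++j) {
        // Adding 8.5 then truncating rounds to nearest and applies the zero point of 8.
        int q0 = static_cast<int>(x[j] * id + 8.5f);
        int q1 = static_cast<int>(x[j + kQK / 2] * id + 8.5f);
        if (q0 > 15) q0 = 15;
        if (q1 > 15) q1 = 15;
        blk.qs[j] = static_cast<uint8_t>(q0 | (q1 << 4));
    }
    return blk;
}

// Inverse: (code - 8) * d.
void dequantize_q4_0f(const BlockQ4_0F& blk, float* out) {
    for (int j = 0; j < kQK / 2; ++j) {
        out[j]           = ((blk.qs[j] & 0x0F) - 8) * blk.d;
        out[j + kQK / 2] = ((blk.qs[j] >> 4) - 8) * blk.d;
    }
}
```

Dividing by -8 rather than +7 maps the largest-magnitude weight to code 0, which becomes -8 after the zero-point shift. That weight therefore reconstructs exactly and gets the wider side of the asymmetric [-8, 7] range.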

The implementation is small enough to show:

```cpp
#include <cstdint>

struct BlockQ4_0 {
    uint16_t d;      // FP16 scale factor, stored as raw bits
    uint8_t qs[16];  // 32 × 4-bit values packed into 16 bytes
};

static_assert(sizeof(BlockQ4_0) == 18, "Q4_0 block must be 18 bytes");
```

Dequantization is subtract-and-multiply:

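```cpp
#include <cmath>

// Minimal IEEE 754 half → float conversion (ggml ships its own, ggml_fp16_to_fp32).
static float fp16_to_fp32(uint16_t h) {
    const int exponent = (h >> 10) & 0x1F, mantissa = h & 0x3FF;
    const float v = exponent == 0  ? std::ldexp(float(mantissa), -24)                     // subnormal
                  : exponent == 31 ? (mantissa ? NAN : INFINITY)                          // NaN / Inf
                  :                  std::ldexp(float(mantissa | 0x400), exponent - 25);  // normal
    return (h & 0x8000) ? -v : v;
}

void dequantize_q4_0(const BlockQ4_0& blk, float* out) {
    const float d = fp16_to_fp32(blk.d);
    for (int i = 0; i < 16; i++) {
        const int lo = blk.qs[i] & 0xF;
        const int hi = blk.qs[i] >> 4;
        out[i]      = (lo - 8) * d;  // low nibbles hold elements 0..15
        out[i + 16] = (hi - 8) * d;  // high nibbles hold elements 16..31 (ggml's layout)
    }
}
```

The Error Budget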

I measured round-trip quantization RMSE across 32,000 normally-distributed weights (σ=0.02, typical for transformer layers):

| Format | Bits/weight | Bytes per 32-weight block | RMSE (σ = 0.02) |
|---|---|---|---|
| Q8_0 | 8.5 | 34 | 0.000108 |
| Q5_1 | 6.0 | 24 | 0.000764 |
| Q4_0 | 4.5 | 18 | 0.001953 |

Q4_0’s error is 18.1x that of Q8_0. Sounds bad until you put it in context: the RMSE of 0.00195 is roughly 10% of the weight standard deviation. In practice, perplexity degradation on Q4_0 vs FP16 is a few percent on standard benchmarks. I’ll take 3.6x memory savings for a few percent perplexity any day.
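A back-of-envelope estimate shows where the "about 10% of σ" figure comes from. It assumes rounding error is spread uniformly across one quantization step Δ. With m the largest magnitude in a 32-weight block, Q4_0’s step is m/8 and Q8_0’s is m/127. For Gaussian weights, m is typically about 2.3σ, which is the median of the largest of 32 standard-normal magnitudes:

```latex
\begin{aligned}
\mathrm{RMSE} &\approx \frac{\Delta}{\sqrt{12}}, \qquad m \approx 2.3\,\sigma \\
\mathrm{RMSE}_{\mathrm{Q4\_0}} &\approx \frac{2.3\,\sigma}{8\sqrt{12}} \approx 0.083\,\sigma \approx 0.0017 \quad (\sigma = 0.02) \\
\mathrm{RMSE}_{\mathrm{Q8\_0}} &\approx \frac{2.3\,\sigma}{127\sqrt{12}} \approx 0.0052\,\sigma \approx 0.00010 \quad (\sigma = 0.02)
\end{aligned}
```

Both estimates land in the same ballpark as the measured values. The predicted ratio, 127/8 ≈ 16, is in the neighborhood of the measured 18.1x. The exact figures depend on rounding and clamping details this estimate ignores.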

The ratios hold across different weight distributions — I verified against both normal and uniform. This matters because real transformer weights aren’t perfectly Gaussian — attention layers have heavy tails, feed-forward layers are near-Gaussian. The block-level scale factor adapts to local magnitude, which is why per-block quantization beats per-tensor even at the same bit width.

GGML also has K-quants (Q2_K through Q6_K). These group weights into 256-weight super-blocks whose sub-blocks carry their own quantized scales. The _S/_M/_L variants additionally spend more bits on the tensors most sensitive to error. Better perplexity, more complex packing. But the mechanism, block-level scaling with packed integer storage, is the same all the way up.

The Hot Loop: Matrix-Vector Multiply

Inference time lives and dies in the dot product: one per output element, every token, across weight matrices that together hold billions of entries.

Scalar vs. SIMD

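A naive scalar dot product over 4096 elements (a typical LLM hidden dimension) takes 3,457 ns on my test machine. That’s one element at a time, and at 3.6 GHz it works out to roughly three cycles per element, because each add has to wait for the previous one to finish.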

Explicit AVX2 with _mm256_fmadd_ps, 8 floats per instruction: 747 ns. A 4.6x speedup. That’s below the theoretical 8x; latency on the accumulator dependency chain, the horizontal reduction, and loop control all eat into it.

The int8 number is the one that matters. An AVX2 int8 dot product using vpmaddubsw + vpmaddwd — the exact instruction sequence llama.cpp uses for quantized kernels — finishes in 74 ns. 46.7x faster than scalar FP32.

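```cpp
// The core of llama.cpp's quantized dot product on AVX2 (one 32-byte step of the loop).
// vb: 32 unsigned 8-bit values, va: 32 signed 8-bit values, acc: running int32 sums.
const __m256i ones = _mm256_set1_epi16(1);        // normally hoisted out of the loop
__m256i prod    = _mm256_maddubs_epi16(vb, va);   // u8×i8, adjacent pairs summed → 16 × int16 (saturating)
__m256i widened = _mm256_madd_epi16(prod, ones);  // adjacent int16 pairs summed → 8 × int32
acc = _mm256_add_epi32(acc, widened);             // accumulate
```

Two multiply instructions (maddubs, then madd against a vector of ones) plus one add fold 32 int8 pairs into eight int32 running sums. The entire 4096-element dot product finishes in 128 iterations of that inner loop.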
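Here is a self-contained sketch of a full int8 dot product built on that sequence. Because maddubs needs one unsigned operand, it uses the sign trick I believe llama.cpp’s AVX2 helpers rely on: take |a| and move a’s sign onto b. The sketch makes three assumptions. First, n is a multiple of 32. Second, inputs stay in [-127, 127], as Q8_0 values do. Third, if AVX2 isn’t enabled at compile time, a scalar loop is compiled instead. `dot_i8` is a hypothetical name.

```cpp
#include <cstddef>
#include <cstdint>
#if defined(__AVX2__)
#include <immintrin.h>
#endif

// Dot product of n int8 pairs (n a multiple of 32, values in [-127, 127]) into int32.
int32_t dot_i8(const int8_t* a, const int8_t* b, size_t n) {
#if defined(__AVX2__)
    const __m256i ones = _mm256_set1_epi16(1);
    __m256i acc = _mm256_setzero_si256();
    for (size_t i = 0; i < n; i += 32) {
        const __m256i va = _mm256_loadu_si256(reinterpret_cast<const __m256i*>(a + i));
        const __m256i vb = _mm256_loadu_si256(reinterpret_cast<const __m256i*>(b + i));
        const __m256i abs_a    = _mm256_sign_epi8(va, va);  // |a|, usable as the unsigned operand
        const __m256i signed_b = _mm256_sign_epi8(vb, va);  // b * sign(a), so |a| * that = a * b
        const __m256i prod = _mm256_maddubs_epi16(abs_a, signed_b);  // 16 × int16 pair sums
        acc = _mm256_add_epi32(acc, _mm256_madd_epi16(prod, ones));  // widen to 8 × int32
    }
    // Horizontal sum of the 8 int32 lanes, done once at the end.
    __m128i s = _mm_add_epi32(_mm256_castsi256_si128(acc), _mm256_extracti128_si256(acc, 1));
    s = _mm_add_epi32(s, _mm_shuffle_epi32(s, _MM_SHUFFLE(1, 0, 3, 2)));
    s = _mm_add_epi32(s, _mm_shuffle_epi32(s, _MM_SHUFFLE(2, 3, 0, 1)));
    return _mm_cvtsi128_si32(s);
#else
    int32_t sum = 0;  // scalar fallback when AVX2 is not enabled
    for (size_t i = 0; i < n; ++i) sum += int32_t(a[i]) * int32_t(b[i]);
    return sum;
#endif
}
```

The [-127, 127] restriction matters. Negating -128 overflows in the sign step, and it keeps each int16 pair sum (at most 128 × 127 × 2) clear of maddubs saturation.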

Can the Compiler Save You?

Reasonable question. Does -O2 -mavx2 auto-vectorize well enough that hand-written intrinsics are pointless?

Partially. GCC 15 compiling sum += a[i] * b[i] with -O2 -mavx2 emits vmulps with %ymm registers, so it vectorizes the multiply. But the reduction stays serial. Without -ffast-math (or -fassociative-math), GCC won’t reassociate floating-point additions, so it adds the products in source order with a chain of vshufps + vaddss instead of accumulating into multiple registers and reducing once at the end.

Clang 21 does slightly better, with vmulps + vaddps for accumulation. Both auto-vectorize, but neither matches hand-tuned intrinsics with FMA and deliberate register allocation. Note that -mavx2 alone doesn’t even enable FMA instructions; that takes -mfma or -march=native. In a hot loop running billions of times per inference, that gap can be the difference between 5 tokens/second and 15.
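For contrast, here is the "multiple registers, reduce once" strategy in intrinsics. It is a sketch that assumes n is a multiple of 32 and that the build enables both AVX2 and FMA (for example with -march=native). Otherwise, a scalar fallback with the same four-accumulator structure is compiled instead. `dot_f32` is a hypothetical name.

```cpp
#include <cstddef>
#if defined(__AVX2__) && defined(__FMA__)
#include <immintrin.h>
#endif

// FP32 dot product with four independent accumulators (n a multiple of 32).
float dot_f32(const float* a, const float* b, size_t n) {
#if defined(__AVX2__) && defined(__FMA__)
    __m256 acc0 = _mm256_setzero_ps(), acc1 = _mm256_setzero_ps();
    __m256 acc2 = _mm256_setzero_ps(), acc3 = _mm256_setzero_ps();
    for (size_t i = 0; i < n; i += 32) {
        // Four FMAs with no dependency on each other can be in flight at once.
        acc0 = _mm256_fmadd_ps(_mm256_loadu_ps(a + i),      _mm256_loadu_ps(b + i),      acc0);
        acc1 = _mm256_fmadd_ps(_mm256_loadu_ps(a + i + 8),  _mm256_loadu_ps(b + i + 8),  acc1);
        acc2 = _mm256_fmadd_ps(_mm256_loadu_ps(a + i + 16), _mm256_loadu_ps(b + i + 16), acc2);
        acc3 = _mm256_fmadd_ps(_mm256_loadu_ps(a + i + 24), _mm256_loadu_ps(b + i + 24), acc3);
    }
    const __m256 acc = _mm256_add_ps(_mm256_add_ps(acc0, acc1), _mm256_add_ps(acc2, acc3));
    // Single horizontal reduction at the end: 8 lanes → 4 → 2 → 1.
    __m128 s = _mm_add_ps(_mm256_castps256_ps128(acc), _mm256_extractf128_ps(acc, 1));
    s = _mm_add_ps(s, _mm_movehl_ps(s, s));
    s = _mm_add_ss(s, _mm_movehdup_ps(s));
    return _mm_cvtss_f32(s);
#else
    float acc[4] = {0.0f, 0.0f, 0.0f, 0.0f};
    for (size_t i = 0; i < n; i += 4)
        for (int k = 0; k < 4; ++k) acc[k] += a[i + k] * b[i + k];
    return (acc[0] + acc[1]) + (acc[2] + acc[3]);
#endif
}
```

Reordering the additions this way is exactly the reassociation the compiler isn’t allowed to do on its own, so results can differ from a strict left-to-right sum in the last bits.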

But there’s a more fundamental issue. Auto-vectorization looks for simple element-wise loops over arrays of one type. It has no idea what a Q4_0 block means: extracting nibbles, subtracting the zero point, multiplying by the block scale, accumulating. That fused dequantize-and-dot-product has to be written by hand; in practice, compilers don’t vectorize across a quantization boundary on their own.

llama.cpp handles this with a dispatch table of type-specialized dot-product functions. ggml_vec_dot_q4_0_q8_0 loads a Q4_0 block, unpacks nibbles with bit shifts and masks, and converts them to int8. It then multiplies against a Q8_0 activation block using maddubs/madd, scales each block’s integer sum by the product of the two block scales, and accumulates the results. All without leaving vector registers.
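Here is a scalar sketch of that fused kernel. It is modeled on the structure of ggml’s portable fallback for ggml_vec_dot_q4_0_q8_0: integer multiply-adds inside each block, then one float multiply by both block scales. The block structs and the function name are hypothetical. They keep their scales in FP32 rather than ggml’s FP16 so the example stays self-contained.

```cpp
#include <cstddef>
#include <cstdint>

constexpr int QK = 32;
// Hypothetical block types: ggml stores the scale d as FP16; float here for brevity.
struct BlockQ4 { float d; uint8_t qs[QK / 2]; };  // 4-bit codes, zero point 8
struct BlockQ8 { float d; int8_t  qs[QK];     };  // signed 8-bit activations

// Fused dequantize-and-dot over nb blocks: integer math inside a block,
// then one float multiply by both scales per block.
float vec_dot_q4_q8(const BlockQ4* x, const BlockQ8* y, size_t nb) {
    float sum = 0.0f;
    for (size_t i = 0; i < nb; ++i) {
        int32_t sumi = 0;
        for (int j = 0; j < QK / 2; ++j) {
            const int v0 = (x[i].qs[j] & 0x0F) - 8;  // element j
            const int v1 = (x[i].qs[j] >> 4) - 8;    // element j + 16
            sumi += v0 * y[i].qs[j] + v1 * y[i].qs[j + QK / 2];
        }
        sum += static_cast<float>(sumi) * x[i].d * y[i].d;
    }
    return sum;
}
```

Keeping the inner loop in integers is what lets the SIMD version map it onto maddubs/madd. The floating-point work drops to one multiply-add per 32 weights.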

Cache-Aware Tiling

Beyond SIMD width, memory access patterns dominate at scale. A naive triple-loop matmul walks one matrix in column-major order, thrashing the cache every inner iteration.

I benchmarked naive vs. tiled (tile size 32):

| Matrix size | Naive | Tiled | Speedup |
|---|---|---|---|
| 512×512 | 176 ms | 84 ms | 2.1x |
| 1024×1024 | 1,917 ms | 700 ms | 2.7x |

The speedup grows with matrix size because cache misses dominate the naive path as the working set blows past L2 and L3. At 1024×1024 the naive version sustains ~1.1 GFLOPS; tiled reaches ~3.1 GFLOPS on the same hardware.
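Here is a minimal sketch of both variants, assuming square row-major FP32 matrices. The function names are mine, and this isn’t necessarily the code behind the numbers above; the tile size simply defaults to 32 to match them. Besides blocking, the tiled version reorders the innermost loops so B is read along its rows instead of down its columns.

```cpp
#include <algorithm>
#include <cstddef>

// C = A * B for row-major n×n matrices, naive i-j-k order.
void matmul_naive(const float* A, const float* B, float* C, size_t n) {
    for (size_t i = 0; i < n; ++i)
        for (size_t j = 0; j < n; ++j) {
            float s = 0.0f;
            for (size_t k = 0; k < n; ++k) s += A[i * n + k] * B[k * n + j];  // B walked down a column
            C[i * n + j] = s;
        }
}

// Same product, blocked into T×T tiles so the working set of A, B and C stays cache-resident.
void matmul_tiled(const float* A, const float* B, float* C, size_t n, size_t T = 32) {
    std::fill(C, C + n * n, 0.0f);
    for (size_t ii = 0; ii < n; ii += T)
        for (size_t kk = 0; kk < n; kk += T)
            for (size_t jj = 0; jj < n; jj += T)
                for (size_t i = ii; i < std::min(ii + T, n); ++i)
                    for (size_t k = kk; k < std::min(kk + T, n); ++k) {
                        const float a = A[i * n + k];
                        for (size_t j = jj; j < std::min(jj + T, n); ++j)
                            C[i * n + j] += a * B[k * n + j];  // contiguous run of a B row
                    }
}
```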

GGML pushes this further with architecture-specific tile sizes tuned to L1/L2 geometry, combined with quantized kernels that shrink the per-tile data footprint.

Why mmap() and Not fread()

By default, PyTorch loads a model by reading the entire file into Python-managed memory, deserializing metadata, and constructing torch.Tensor objects. (torch.load has accepted mmap=True since PyTorch 2.1, but that isn’t the default.) For a 4 GB model, that takes multiple seconds and peaks well above 4 GB of resident memory.

llama.cpp calls mmap(). The kernel maps the GGUF file into the process’s virtual address space. No data is copied until a page is touched — the OS demand-pages from disk or, much more commonly, from the page cache.

I benchmarked sequential and random reads over a 256 MB file (about one model layer):

| Access pattern | fread | mmap |
|---|---|---|
| Sequential | 65.8 ms | 0.03 ms |
| Random | 13.4 ms | 0.003 ms |

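Those mmap numbers look fake. They aren’t, but they’re measuring something different. fread copies every byte from the kernel page cache into a userspace buffer through a syscall boundary. mmap copies nothing: the process reads the page-cache pages in place. When the file is already cached (the common case after your first run), the first touch of each page costs a cheap minor page fault to wire it into the process’s page tables. After that, an access is an ordinary memory load resolved through the TLB. Read the mmap column as the cost of skipping the copy, not as a throughput figure.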

The practical result: no “loading model…” progress bar on subsequent runs. The model stays in the page cache. mmap() returns in microseconds.

There’s a tradeoff on cold start. If the file isn’t cached (first boot, or under memory pressure), mmap triggers page faults that stall inference. llama.cpp mitigates this in two ways. On Linux it uses MAP_POPULATE, which pre-faults the whole mapping during the mmap() call itself. On POSIX systems, including macOS, it calls posix_madvise(POSIX_MADV_WILLNEED) to hint the kernel to start prefetching.

Two llama.cpp processes sharing the same model file share the same physical pages through the page cache automatically — no syscall, no shared-memory setup. This is why mmap beats new char[size] + fread() even when raw throughput isn’t the concern.
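Here is a minimal sketch of that loading pattern using only POSIX calls. It creates a read-only shared mapping of the whole file, pre-faulted with MAP_POPULATE where the platform defines it (Linux), plus a POSIX_MADV_WILLNEED hint. MappedFile is my own RAII wrapper, not llama.cpp’s class, and it skips details such as NUMA handling and Windows support.

```cpp
#include <fcntl.h>
#include <sys/mman.h>
#include <sys/stat.h>
#include <unistd.h>
#include <cstddef>
#include <stdexcept>
#include <string>

// Read-only, shared mapping of an entire file; unmapped on destruction.
class MappedFile {
public:
    explicit MappedFile(const std::string& path) {
        const int fd = ::open(path.c_str(), O_RDONLY);
        if (fd < 0) throw std::runtime_error("open failed: " + path);
        struct stat st {};
        if (::fstat(fd, &st) != 0) { ::close(fd); throw std::runtime_error("fstat failed"); }
        size_ = static_cast<size_t>(st.st_size);
        int flags = MAP_SHARED;  // shared: other processes mapping the file reuse the same pages
#ifdef MAP_POPULATE
        flags |= MAP_POPULATE;   // Linux: pre-fault the whole mapping inside mmap()
#endif
        void* p = ::mmap(nullptr, size_, PROT_READ, flags, fd, 0);
        ::close(fd);             // the mapping keeps its own reference to the file
        if (p == MAP_FAILED) throw std::runtime_error("mmap failed: " + path);
        data_ = p;
        ::posix_madvise(data_, size_, POSIX_MADV_WILLNEED);  // advisory prefetch hint
    }
    ~MappedFile() { if (data_) ::munmap(data_, size_); }
    MappedFile(const MappedFile&) = delete;
    MappedFile& operator=(const MappedFile&) = delete;

    const unsigned char* data() const { return static_cast<const unsigned char*>(data_); }
    size_t size() const { return size_; }

private:
    void* data_ = nullptr;
    size_t size_ = 0;
};
```

Nothing is read into a private buffer. Pointers into `data()` can serve directly as tensor storage, which is what makes "the file is the tensor storage" work.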

The KV cache (the per-token key and value tensors that grow during generation) is a separate malloc’d allocation managed by GGML’s internal allocator. It scales linearly with context length and with the model’s layer count and KV width, but not with how the weights are quantized. That’s why a 7B model with 128K context can still eat 32 GB of RAM.
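The sizing rule is a one-line formula. This sketch assumes one K and one V tensor per layer, each n_ctx × kv_width elements. `kv_cache_bytes` is a hypothetical helper. The example shape (32 layers, 4096-wide K/V, FP16 cache) matches LLaMA-2-7B and is used only as an illustration.

```cpp
#include <cstdint>

// Bytes of K and V cache: 2 tensors (K and V) × layers × context × KV width × bytes per element.
// kv_width = n_kv_heads × head_dim (equal to the hidden size without grouped-query attention).
constexpr uint64_t kv_cache_bytes(uint64_t n_layer, uint64_t n_ctx, uint64_t kv_width,
                                  uint64_t bytes_per_elem) {
    return 2 * n_layer * n_ctx * kv_width * bytes_per_elem;
}

// LLaMA-2-7B-like shape with an FP16 cache: 0.5 MiB per token, 2 GiB at a 4K context.
static_assert(kv_cache_bytes(32, 1, 4096, 2) == 524288, "0.5 MiB per token");
static_assert(kv_cache_bytes(32, 4096, 4096, 2) == 2147483648ULL, "2 GiB at 4K context");
```

At 131,072 tokens that shape needs 64 GiB. A grouped-query model with 8 KV heads of 128 dimensions (kv_width 1024) needs 16 GiB. The real figure for a given "7B" therefore varies a lot with architecture and cache element type.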

Bandwidth Is the Real Bottleneck

With quantized weights and SIMD dot products in place, what actually limits throughput? On CPU, it’s memory bandwidth. Every transformer layer’s matrix-vector product reads the entire weight matrix.

For a 4096×4096 weight layer, FP32 needs 16,512 bytes per output element, while Q4_0 needs 2,432. That’s a 6.8x bandwidth difference.

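I measured the FP32 path sustaining 4.78 GB/s on an i7-4790. That’s well short of the 25.6 GB/s theoretical peak of dual-channel DDR3-1600, yet memory is still what limits it. At 4.78 GB/s, FP32 weights feed only about 2.4 GFLOPS of multiply-adds. A single AVX2 core, with two 8-wide FMA units at 3.6 GHz, can execute roughly 115 GFLOPS. The bottleneck isn’t compute; it’s how fast data arrives from DRAM.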

That’s what makes the whole design coherent: quantization is a bandwidth optimization. The same memory bus delivers 6.8x more useful weights per second. Everything else in llama.cpp exists to exploit that fact.
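A single-token forward pass reads essentially every weight once, so bandwidth divided by model size gives a quick ceiling on tokens per second. Here is a sketch of that back-of-envelope bound. The function is hypothetical; it ignores KV-cache traffic and any cache reuse, so real throughput lands below it.

```cpp
#include <cmath>

// Upper bound on decode speed when each generated token streams all weights from DRAM once.
constexpr double max_tokens_per_second(double bandwidth_gb_s, double weights_gb) {
    return bandwidth_gb_s / weights_gb;
}

// Round-trip helper for comparing two formats on the same memory bus.
inline double speedup_from_smaller_weights(double big_gb, double small_gb) {
    return std::fabs(big_gb / small_gb);
}
```

At the 25.6 GB/s theoretical peak of dual-channel DDR3-1600, that’s about 1.8 tokens/s for the 14 GB FP16 model and about 6.7 tokens/s for the 3.8 GB Q4_0 one. The ratio between them is the same ~3.7x as the file sizes.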

Backend Dispatch

GGML’s computation graph is backend-agnostic. The dispatch mechanism is a struct of C function pointers. Each backend fills in an interface (ggml_backend_i, with entries such as graph_compute) and reports which operations it supports. It is then handed whole subgraphs to execute and dispatches internally on each node’s op (GGML_OP_MUL_MAT, GGML_OP_ADD, and so on). No template metaprogramming. No CRTP. Function pointers.
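Here is a toy version of the function-pointer pattern. It is loosely modeled on ggml’s backend interface, which does have a graph_compute entry, but every type and name here is invented and drastically simplified. The executor never knows which backend it’s talking to, and the backend switches on op type internally.

```cpp
#include <cstddef>

// Hypothetical, heavily simplified stand-ins for ggml's graph types.
enum class Op { Add, Mul };
struct Node { Op op; float a, b, out; };

// A backend is a table of C function pointers plus opaque state.
struct BackendIface {
    const char* (*name)(void* ctx);
    bool (*supports_op)(void* ctx, const Node* node);
    void (*graph_compute)(void* ctx, Node* nodes, size_t n);
};
struct Backend { BackendIface iface; void* ctx; };

// "CPU" implementation: per-op dispatch happens inside graph_compute.
static const char* cpu_name(void*) { return "CPU"; }
static bool cpu_supports(void*, const Node*) { return true; }
static void cpu_compute(void*, Node* nodes, size_t n) {
    for (size_t i = 0; i < n; ++i) {
        Node& nd = nodes[i];
        switch (nd.op) {
            case Op::Add: nd.out = nd.a + nd.b; break;
            case Op::Mul: nd.out = nd.a * nd.b; break;
        }
    }
}
Backend make_cpu_backend() { return Backend{{cpu_name, cpu_supports, cpu_compute}, nullptr}; }

// The executor only ever calls through the table.
bool run(Backend& be, Node* nodes, size_t n) {
    for (size_t i = 0; i < n; ++i)
        if (!be.iface.supports_op(be.ctx, &nodes[i])) return false;
    be.iface.graph_compute(be.ctx, nodes, n);
    return true;
}
```

Adding a backend means writing a new set of functions and a factory; the executor and the graph don’t change.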

The CPU backend uses the SIMD kernels I’ve been describing. CUDA handles NVIDIA GPUs, Metal handles Apple Silicon, and Vulkan covers AMD, Intel, and NVIDIA as a cross-platform fallback. The pragmatic consequence is layer splitting. If your GPU has 6 GB VRAM and the model is 8 GB, you put 24 layers on the GPU and 8 on the CPU with the -ngl (--n-gpu-layers) flag. In practice you use a few fewer, since the KV cache and compute buffers need VRAM too. Each layer dispatches to whichever backend owns its weights.
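The arithmetic behind choosing a split is simple enough to sketch. `layers_that_fit` is a hypothetical helper, not part of llama.cpp, and it assumes equally sized layers. Real models also have embedding and output tensors, and the right reserve for KV cache and scratch buffers depends on context length.

```cpp
#include <algorithm>
#include <cstdint>

// How many equally sized layers fit in VRAM after reserving room for the KV cache
// and compute buffers: roughly the value you would pass to -ngl.
uint32_t layers_that_fit(uint64_t vram_bytes, uint64_t reserve_bytes,
                         uint64_t bytes_per_layer, uint32_t n_layers) {
    if (bytes_per_layer == 0 || vram_bytes <= reserve_bytes) return 0;
    const uint64_t fit = (vram_bytes - reserve_bytes) / bytes_per_layer;
    return static_cast<uint32_t>(std::min<uint64_t>(fit, n_layers));
}
```

With 6 GB of VRAM and an 8 GB, 32-layer model, no reserve gives 24 layers; setting aside 1 GB drops that to 20.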

On Apple Silicon, the Metal backend runs a different playbook. M-series chips have unified memory: CPU and GPU share physical DRAM. No PCIe bus to cross, no explicit memory transfers. The weight tensors are mmap’d once, and both backends read from the same pages. That removes the VRAM-capacity limit and host-to-device copies that constrain discrete-GPU inference. Combined with memory bandwidth that is high for a desktop machine, it’s a large part of why Mac Studios are popular for local LLM inference despite modest TFLOPS numbers compared to NVIDIA hardware.

What I Took Away

None of these techniques is secret. Quantization, SIMD dot products, mmap, cache tiling: all are well-known in isolation. What’s impressive is making them compose in ~50K lines of C/C++ with no required dependencies beyond the C and C++ standard libraries. The build is a plain CMake project, with no package manager and no framework to install first.

C++ on a CPU, written with attention to memory hierarchy and data layout, can do work most people assume requires a data-center GPU. Not because CPUs are secretly fast — they’re still orders of magnitude slower than an A100 — but because most of the “GPU requirement” was framework overhead. Remove the framework, compress the data, respect the cache. A laptop works.

*All measurements taken on an Intel i7-4790 (3.6 GHz, 4C/8T, AVX2) with DDR3-1600, GCC 15.2.1 and Clang 21.1.8, Fedora 43.*