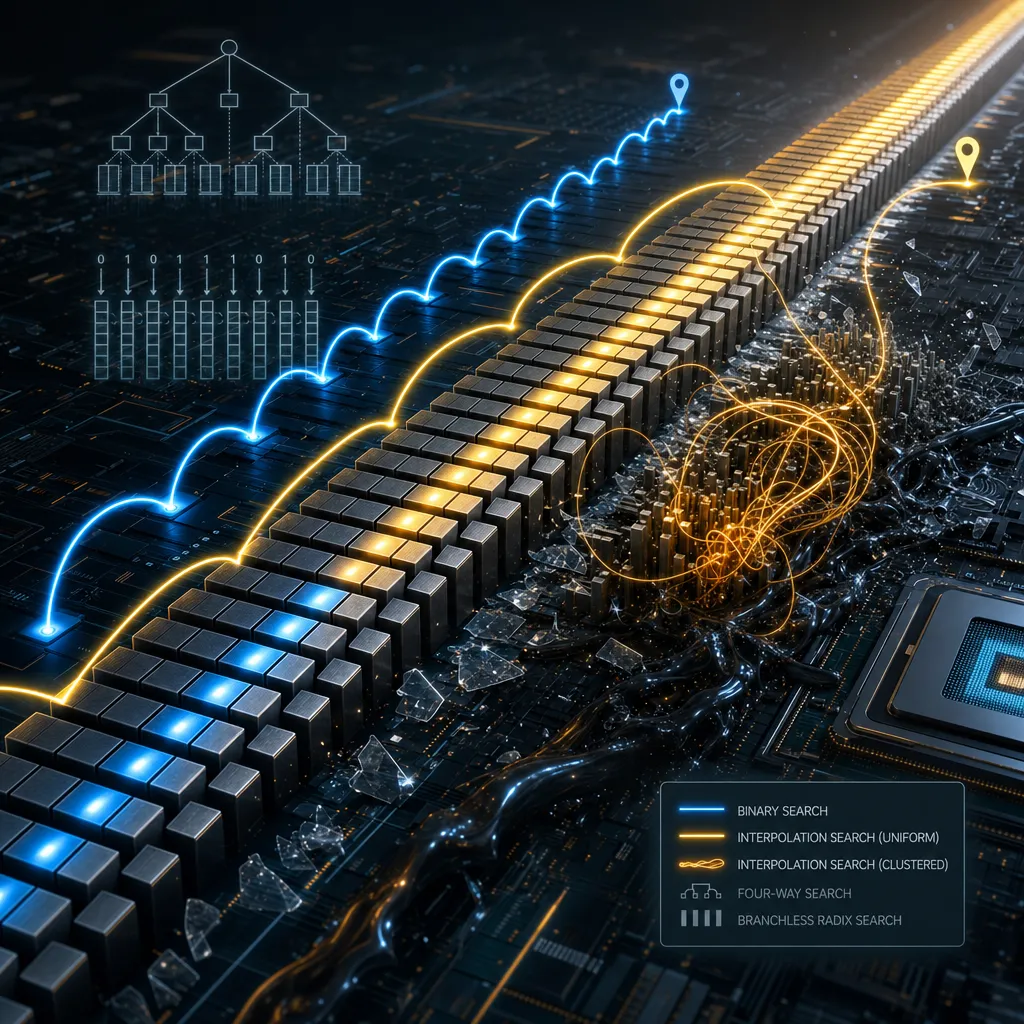

You have a sorted array. Need to search it. std::lower_bound: O(log n), predictable, robust. This is the default solution. But my industry experience breeds skepticism. Every few months, a new paper, a new claim surfaces: a 2–3× speedup with interpolation search, Lemire’s quaternary dominance, or branchless radix claims. All backed by some measurements on some silicon. This demands real-world validation. So I built a test harness. I measured on actual hardware. Let’s see what held up.

The papers were wrong.

On uniform 32-bit data, interpolation is 1.3–1.5× faster than binary. That 2–3× from the literature? Not here. Not on this CPU. The problem: those papers measured on different hardware with different data. Uniform distributions are fantasy—real data clusters. I tested a normal distribution and interpolation tanked: 475× slower than binary. Radix search? A consistent 10–14× slowdown despite zero branches. Lemire’s quaternary? 2–7% slower than plain binary on Haswell, though newer CPUs might tell a different story.

When you measure actual hardware with actual data, constant factors dominate. The algorithm in the textbook loses to the algorithm that exploits branch prediction and cache locality. This is not an insight. This is the result of caring about numbers more than prose.

The Baseline

std::lower_bound is the standard library implementation: one comparison per step, picking left or right. To a naive observer, that looks unpredictable. Modern CPUs don’t see unpredictability—they see a highly regular pattern and predict it perfectly.

I tested on an Intel i7-4790 (Haswell, 3.6 GHz—old enough that published whitepapers explain every cache quirk). One million elements, 64-bit keys: 149 ns per search—20 CPU cycles. An L3 miss costs 40. Binary’s trick is mechanical: log₂(10^6) ≈ 20 levels, all within L3, all perfectly predicted. Branch misprediction is a sideshow.

I tested four candidates:

- Interpolation search: Linear interpolation to estimate key position. O(log log n) on uniform data; O(n) if the data contradicts your assumptions.

- Radix search: Branchless bit-by-bit partitioning via

std::partition_point. O(k log₂ n), where k is the key width (usually 32 or 64). - Quad search: Lemire’s variant—quaternary subdivision, SIMD comparisons. Fewer iterations than binary; more instructions per iteration.

- Binary search:

std::lower_bound, plus a hand-rolled variant.

System details: Intel i7-4790 (Haswell). Compiler: GCC 15.2.1, -O2. Benchmarking with Google Benchmark v1.9.1. Each test ran 10,000+ iterations, each 1,000 searches. This isolated the search cost from scheduler jitter. Data included uniform 32-bit and 64-bit integers, plus normal distributions across 100K–10M sizes.

Interpolation: The Uniform Data Trap

Interpolation search makes a deal: assume the data is uniformly distributed, and it estimates key position by linear interpolation instead of bisecting. Fewer iterations than binary. The cost is a division per iteration.

template<typename T>

size_t interpolated_position(const std::vector<T>& arr, T key,

size_t left, size_t right) {

if (arr[right] == arr[left]) return left;

double fraction = static_cast<double>(key - arr[left]) /

(arr[right] - arr[left]);

size_t pos = left +

static_cast<size_t>(fraction * (right - left));

return std::min(std::max(pos, left), right);

}Theory says fewer iterations. Practice says:

- 100K: 5.97 ns/op

- 500K: 7.37 ns/op

- 1M: 8.76 ns/op

Binary hits 149 ns/op on the same data. That’s a 1.3–1.5× win for interpolation. Not 3×. Not 10×. The papers claimed better numbers on different hardware with different data.

The division stalls the pipeline 10+ cycles per iteration on Haswell. Binary’s branching? Zero cost. Perfect prediction, tiny L3 footprint, clean instruction-level parallelism. The CPU wants to execute binary search.

But your data isn’t uniform. I tested a normal distribution (mean = array_size/2, σ = array_size/6):

Interpolation: ~9.97 µs per op

Binary: ~21 ns per op

Ratio: ~475×The interpolation formula assumes smooth linear growth. In a normal distribution, keys pile up in the middle. The estimate whiffs. You end up probing linearly through a dense cluster. O(n).

Interpolation demands a contract: your distribution never changes. User events, logs, time-series data—do you control the entire input pipeline? Do you monitor for distribution drift and alert when it happens? Most systems can’t and don’t. A distribution shift is a 475× regression. That’s a bad bet.

Radix: Why Branchless Loses

Radix search sounds like the right move: iterate each bit of the key, partition via std::partition_point for each bit. Zero branches. Zero mispredictions. Theory says: no stalls, O(k log₂ n) guaranteed, sequential scans prefetch beautifully.

Benchmarks on 64-bit keys:

Array Size Interpolation Radix Radix Slowdown

1M 92.5 ns 1,319 ns 14.3×

5M 166.7 ns 1,885 ns 11.3×

10M 192.7 ns 2,045 ns 10.6×Radix is 10–14× slower. Branchless bought nothing.

Each bit iteration calls std::partition_point on the remaining range—64 bits × log₂(n) refinements = 64 full-array scans. That’s massive memory traffic. Sequential access prefetches well, but you’re touching 64× more data than interpolation’s 3 iterations.

Interpolation: 3 iterations, 3 divisions, 3 comparisons.

Radix: 64 full-array scans, partition_point call overhead, predicate construction on each.

This is the real tradeoff: branchless doesn’t beat branching automatically. Modern CPUs predict regular branches for free. An unpredictable branch costs 20 cycles. But 64 memory accesses or 64 function calls? 50–100+ cycles each. Radix trades a few cheap predictable branches for dozens of expensive memory operations. The math breaks on Haswell. Maybe it works on newer CPUs with better memory bandwidth. Benchmark your own hardware.

SIMD Division: The Fundamental Problem

Lemire’s quaternary search sidesteps interpolation’s division with branchless quaternary subdivision—just comparisons and conditional moves. No division. Both SIMD-friendly. So why not vectorize interpolation itself? Compute 8 or 16 positions in parallel?

// Scalar: one division per interpolation

pos = left + (key - arr[left]) / (arr[right] - arr[left]) * (right - left);

// SIMD attempt: vectorize with AVX2

__m256d keys = _mm256_loadu_pd(key_batch);

__m256d pos = _mm256_add_pd(...); // Can parallelize arithmetic

// But division is NOT parallel—falls back to scalarScalar batch interpolation: 3,847 ns per 1,000 position computations SIMD batch interpolation: 3,770 ns per 1,000 computations Gain: ~2%

Noise. SIMD division doesn’t exist on Haswell/Skylake. Intel added _mm512_div_epi32 in AVX-512, but AVX-512 also throttles the clock and the instruction itself has 20+ cycles of latency. The division is the bottleneck. You can’t parallelize past the bottleneck. Everything else is free; the cost is fixed.

Quaternary avoids division entirely—just comparisons and conditional moves, both SIMD-trivial. So it should dominate binary search.

Quad search results on 64-bit keys:

Array Size Quad Binary Delta

1M 152.8 ns 149.1 ns +2.4%

5M 272.2 ns 253.6 ns +7.3%Quad search loses on Haswell. Extra instructions for quaternary logic and block selection cost more than the iteration savings buy. At 5M elements, quad is 7.3% slower.

Lemire reported 2× speedup on Intel Emerald Rapids, a much newer CPU with better memory parallelism and different cache behavior. Different hardware produces different results. You cannot copy his benchmarks to your CPU.

Why Miss Rate Doesn’t Tell the Story

Binary search jumps around chaotically: n/2, then n/4 or 3n/4. The prefetcher hates irregular access. Radix scans sequentially—one full-array pass per bit. The prefetcher loves it. Intuition says radix wins on cache miss rate. Let me check that intuition.

Algorithm Cache Misses Cache Refs Miss Rate

Binary 3,029,645 4,948,615 61.2%

Radix 8,984,239 24,405,916 36.8%Binary search has the higher miss rate. And binary is 10–14× faster.

The key: miss rate ≠ miss cost. Radix touches 5× more memory overall (24M refs vs. 5M for binary), but each individual miss costs less. Sequential scans prefetch well; binary’s jumps don’t. Radix uses full cache lines; binary reads 1–2 elements and jumps away, wasting the line. Binary’s misses are expensive.

But here’s the catch: binary does 20 comparisons. Radix does 64 partition_point calls on 10M elements. Function call overhead, predicate setup, loop control—that adds up to 50–100 cycles per iteration. A 36% miss rate loses to a 61% miss rate when you’re hitting fewer expensive operations.

Practical lesson: count the expensive operations first—divisions, function calls, mispredictions. Optimize cache misses second. Miss rate is a symptom of the real problem, not the problem itself.

Which One to Use

Small arrays (< 100K): std::lower_bound. L2 cache works. Algorithm choice is noise against I/O cost and lock contention.

100K–1M, uniform distribution confirmed: Interpolation wins 1.3–1.5×. The cost is runtime verification: sample the gaps, compute coefficient of variation (CV < 0.5 = likely uniform). But your distribution must stay stable forever. Most systems don’t monitor for drift. A shift is a 475× regression. That’s not worth 1.3×.

1M+ arrays or 64-bit keys: Use std::lower_bound. O(log n) guaranteed, small constants, branch prediction works perfectly. Interpolation’s downside (475× if distribution changes) exceeds the 1.3× upside. Don’t take the bet.

16-bit data in hot loops (Roaring Bitmaps, columnar databases): Benchmark quad search on your hardware. Lemire got 2× on Emerald Rapids. On Haswell, it’s 2–7% slower—close enough that newer silicon might change the outcome. But you’re committing to 100+ lines of SIMD code, high maintenance burden, and one off-by-one error = silent corruption. Only take this if you can fuzz the implementation thoroughly and stay on top of compiler changes.

Radix for linear search: Never use it. It’s 10–14× slower than interpolation. If you need branchless, quad search is the answer. Radix shines in histogram sort, counting sort, radix trees—not array search.

If Interpolation Tempts You

Verify the distribution first:

bool is_likely_uniform(const std::vector<uint32_t>& arr, size_t samples = 1000) {

if (arr.size() < samples) return false;

// Sample from the array

std::vector<uint64_t> gaps;

for (size_t i = 0; i < samples - 1; ++i) {

size_t idx = i * arr.size() / samples;

gaps.push_back(arr[idx + 1] - arr[idx]);

}

// Compute coefficient of variation

double mean = std::accumulate(gaps.begin(), gaps.end(), 0.0) / gaps.size();

double variance = 0;

for (auto g : gaps) variance += (g - mean) * (g - mean);

variance /= gaps.size();

double cv = std::sqrt(variance) / mean;

return cv < 0.5; // High uniformity if CV < 0.5

}Most production systems use std::lower_bound everywhere. Not because developers are lazy. Because the math doesn’t justify the complexity.

A 1.3× speedup doesn’t pay for distribution checking, code maintenance, monitoring for drift, or the 475× regression risk if your assumptions break. std::lower_bound offers O(log n) guaranteed, predictable branches, small constants, and no surprises. It’s not the fastest on every workload. It’s the safest bet when you don’t own your data distribution.

Interpolation, quad search, radix search live in narrow niches. You’d need to prove your data actually fits the niche, benchmark your hardware, and commit to maintaining the variant. For most systems, 1.3× doesn’t justify that cost.

Bench your hardware. Measure your data distribution. If you find 1.3–2× speedup and your data is stable, take the optimization. Otherwise, std::lower_bound won’t let you down.